Surface Realisation Shared Task 2020

July - October 2020

SR'20 Legacy task

- The general objective of the Surface Realisation Shared Task is to compare approaches to surface realisation using common datasets based on semantic and syntactic structured annotations. The results of this third Multilingual Surface Realisation Shared Task (SR'20) and the participating systems will be presented during the Third Multilingual Surface Realisation Workshop @COLING 2020.

The task is endorsed by the ACL Special Interest Group on Natural Language Generation (SIGGEN).

Task

- This year's task uses the same training, validation and in-domain test sets as the SR’19 Shared Task, with the same data and resource restrictions (see section on Data below). However, this year’s shared task differs in two respects: (i) the addition of an "open" mode in each track, where there are no restrictions on the resources that can be used, and (ii) the introduction of new evaluation datasets.

- As in previous years, the goal of the shared task is to generate a well-formed sentence given the input structure, and there are two tracks with different levels of complexity:

- Shallow Track (Track 1): This track starts from vanilla UD structures in which word order information has been removed and tokens have been lemmatised, i.e. the inputs are unordered dependency trees with lemmatised nodes that contain PoS tags and morphological information as found in the original annotations. The task is equivalent to determining the word order and inflecting the words. As indicated above, there will be both a closed (T1a) and an open (T1b) subtrack.

- Deep Track (Track 2): This track starts from UD structures from which functional words (in particular, auxiliaries, functional prepositions and conjunctions) and surface-oriented morphological information have been removed. In addition to what has to be done for the Shallow Track, the Deep Track thus involves introducing the removed functional words and morphological features. Again, there will be both a closed (T2a) and an open (T2b) subtrack.

- Participating teams can choose to address one or both of the two shared task tracks T1 and T2. However, in their chosen track(s), teams are required to submit outputs for the closed mode(s) (T1a and T2a). In addition, teams can choose to also submit to the open mode(s) (T1b and T2b). For instance, if you are mainly interested in the T1b task, you are required to also provide outputs for T1a, where you train your models with the provided data resources plus any of the specifically allowed additional resources.

- Recommended papers and links:

- Dataset: Mille et al. (INLG'18)

- Overview and results: SR'18, SR'19, SR'20

- High-scoring systems: Elder et al. (ACL'20), Yu et al. (ACL'20)

- NEW: SR20 system descriptions: MSR workshop proceedings (COLING'20)

- NEW: SR20 submissions: Download

Registration


To register for the task, please send an email to msr.organizers@gmail.com with your name and affiliation. Note that the data is available for direct download from the Generation Challenges (GenChal) repository, but please don’t forget to also register with us via the above email address. Teams must be registered in order to be entered in the human evaluations (please note that we cannot guarantee we will be able to run human evaluations for all SR’20 languages). There is no obligation to submit results at the end of the process, and we do not disclose the identity of the registered teams.

Important Dates


12 July 2020: Registration for the task open

12 July 2020: Training and development sets released, evaluation scripts released

25 September 2020: Test sets released

12 December 2020: Presentation of results and systems @MSR workshop

Information


04/12

- The link to access the presentations and to attend the workshop is now available: Underline. The programme is available on the MSR workshop page.

- Due to exceptional circumstances, the deadlines for submissions and the human evaluation have been postponed.

- The test sets have been released! Please register for the task to receive the data.

- The deadline for registration for the task has been extended to October 1! Note that we may not be able to guarantee participation in the human evaluation in case of late registration.

- The tool for producing T1 and T2 structures from UD structures is now available on GitLab.

- The shared task has been publicly announced and is open!

- The shared task will be launched soon.

Data


Training and development data: You can download the data, the evaluation scripts and more from the Generation Challenges repository. The compressed folder contains (i) the original UD datasets for 11 languages as they can be found on the UD page (20 + 20 files); (ii) the Track 1 datasets (20 + 20 files); (iii) the Track 2 datasets (9 + 9 files); (iv) statistics about all the datasets (98 files). Further instructions regarding how to use the evaluation scripts are provided in the evaluation section below. Please register before

Conversion tool: You can download the tool that converts CoNLL-U structures to T1 and T2 inputs from GitLab.

- The data used is the Universal Dependency treebanks V2.3, that is, the datasets released after the completion of the CoNLL'18 shared task on Multilingual Parsing from Raw Text to Universal Dependencies (V2.2). The inputs to the Shallow and Deep Tracks are distributed in the 10-column CoNLL-U format, under the same licenses as the data they come from (see UD page).

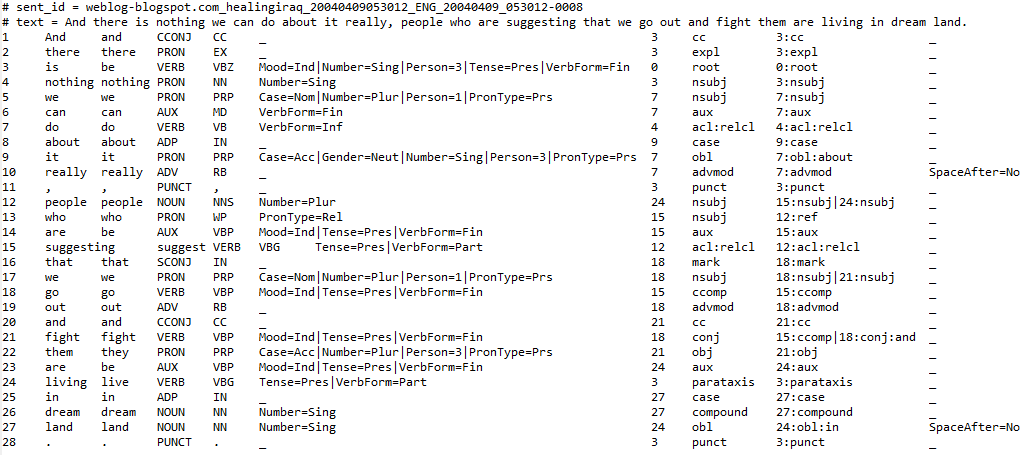

- To create the input to the Shallow Track, the UD structures were processed as follows:

- The information on word order was removed by randomised scrambling.

- The words were replaced by their lemmas.

- Two features were added to store information about (i) relative linear order with respect to the governor (lin), and (ii) alignments with the original structures (original_id, in the training data only).

- Languages: Arabic, Chinese, English (4 datasets), French (3), Hindi, Indonesian, Japanese, Korean (2), Portuguese (2), Russian (2) and Spanish (2).
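The Shallow Track processing steps above can be sketched roughly as follows. This is a minimal illustration, not the organisers' conversion tool: the function names are hypothetical, storing original_id in the FEATS column is an assumption, and the lin feature is left out for brevity.

```python
import random


def parse_conllu_sentence(block):
    """Parse one CoNLL-U sentence block into a list of 10-column token rows."""
    rows = []
    for line in block.strip().splitlines():
        if line.startswith("#"):
            continue
        cols = line.split("\t")
        # Skip multiword-token ranges (e.g. "1-2") and empty nodes (e.g. "1.1").
        if len(cols) != 10 or not cols[0].isdigit():
            continue
        rows.append(cols)
    return rows


def make_shallow_input(rows, seed=0):
    """Scramble token order, replace forms by lemmas, and remap IDs and heads."""
    rng = random.Random(seed)
    shuffled = rows[:]
    rng.shuffle(shuffled)
    # Map each original ID to its position in the scrambled order.
    new_id = {cols[0]: str(i) for i, cols in enumerate(shuffled, start=1)}
    new_id["0"] = "0"  # the root keeps head 0
    out = []
    for cols in shuffled:
        cols = cols[:]
        original_id = cols[0]
        cols[0] = new_id[original_id]
        cols[1] = cols[2]          # FORM is replaced by LEMMA
        cols[6] = new_id[cols[6]]  # HEAD follows the new numbering
        # Assumption: the alignment is kept as an extra FEATS attribute.
        feats = [] if cols[5] == "_" else cols[5].split("|")
        feats.append("original_id=" + original_id)
        cols[5] = "|".join(feats)
        out.append(cols)
    return out


sample = "\n".join([
    "# text = Dogs barked",
    "1\tDogs\tdog\tNOUN\t_\tNumber=Plur\t2\tnsubj\t_\t_",
    "2\tbarked\tbark\tVERB\t_\tTense=Past\t0\troot\t_\t_",
])
shallow = make_shallow_input(parse_conllu_sentence(sample))
```

Remapping the HEAD column together with the IDs keeps the scrambled structure a connected tree, so only the linear order and the inflected forms are lost.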


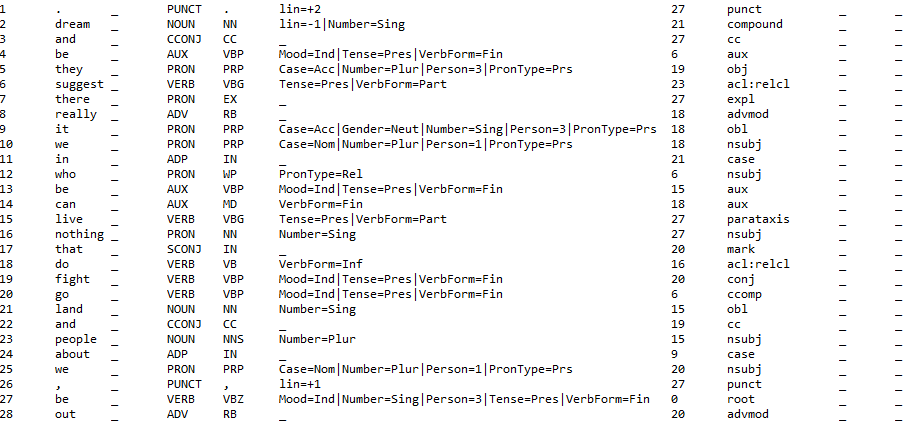

For the Deep Track, additionally:

- Functional prepositions and conjunctions that can be inferred from other lexical units or from the syntactic structure were removed.

- Determiners and auxiliaries were replaced (when needed) by attribute/value pairs such as Definiteness, Aspect, and Mood.

- Edge labels were generalised into predicate argument labels in the PropBank/NomBank fashion.

- Morphological information coming from the syntactic structure or from agreements was removed.

- Fine-grained PoS labels found in some treebanks were removed, and only coarse-grained ones were maintained.

- Languages: English (4 datasets), French (3) and Spanish (2).

Informal documentation, with details on how the UD relations were mapped to predicate-argument relations, alignments between files, etc.: Open


List of explicitly allowed additional resources for the closed subtracks:

- Word2vec, including branches such as Word2vecf

- ELMo

- BERT

- GPT-2

- polyglot

- GloVe

- UD parsers such as UUParser

- The above models can be fine-tuned if needed using publicly available datasets such as WikiText and the DeepMind Q&A dataset

Example

The structures for Track 1 and 2 are connected trees; the data has the same columns as the original CoNLL-U format; however, for the SR'19, the reference sentences can be found in the original UD data. The goal of both tasks is, for each input structure, to get as close as possible to the reference sentence.

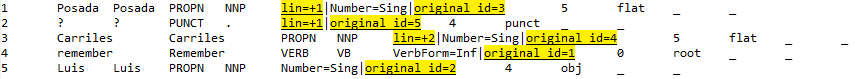

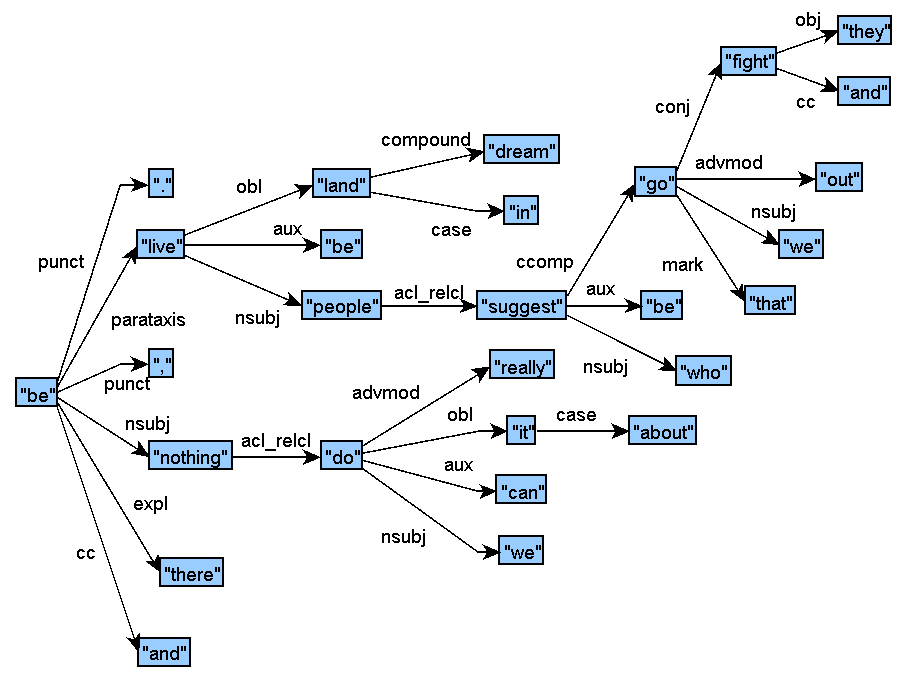

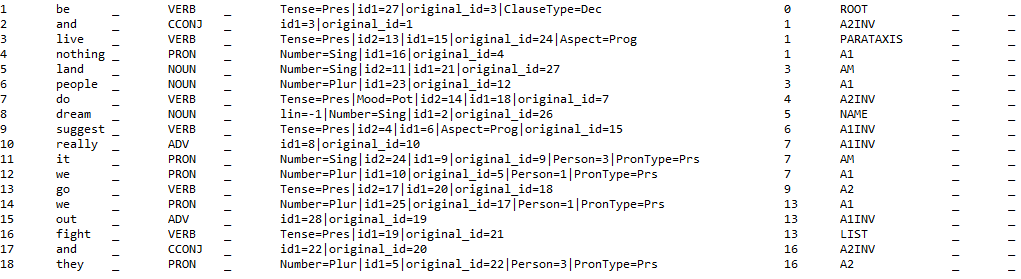

Sample original CoNLL-U file for English:


Track 1 training sample for English (CoNLL-U):


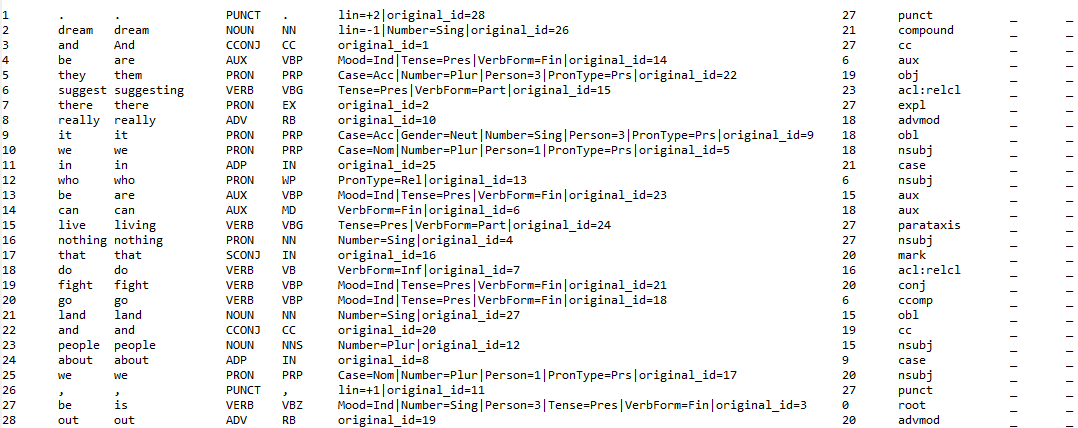

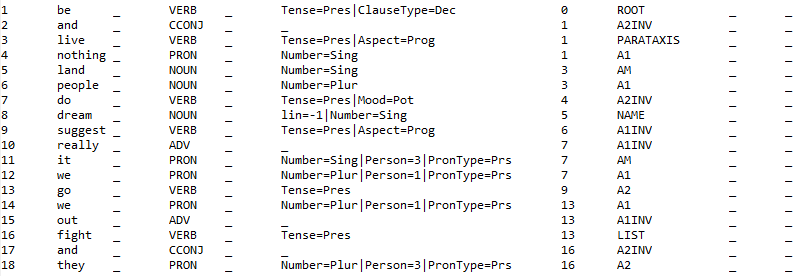

Track 1 input (dev/test) sample for English (CoNLL-U): the alignments with the surface tokens are not provided.


Track 1 sample structure for English (graphic):


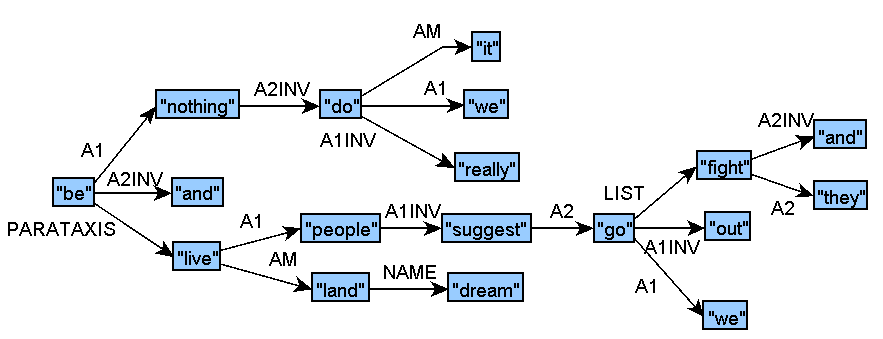

Track 2 training sample for English (CoNLL-U):


The nodes of the Track 2 structures are aligned with the nodes of the Track 1 structures through the attributes id1, id2, id3, etc. Each node can correspond to 0 to 6 superficial nodes; multiple node correspondences involve in particular dependents with the auxiliary, case, det, or cop relations.
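As a rough illustration of how these alignments could be read, the sketch below collects the id1, id2, ... values of one node. It assumes the attributes are stored as `key=value` pairs separated by `|` in a FEATS-style string; the function name and the sample string are hypothetical.

```python
def aligned_ids(feats):
    """Return the values of id1, id2, ... found in a FEATS-style string."""
    if feats in ("", "_"):
        return []
    pairs = dict(item.split("=", 1) for item in feats.split("|") if "=" in item)
    ids = []
    n = 1
    # Collect id1, id2, ... until the first missing index.
    while "id" + str(n) in pairs:
        ids.append(pairs["id" + str(n)])
        n += 1
    return ids


example = aligned_ids("Number=Sing|id1=4|id2=2|Definite=Def")
```

A node with no id attributes yields an empty list, which corresponds to the "0 superficial nodes" case mentioned above.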


Track 2 input (dev/test) sample for English (CoNLL-U): the alignments with the surface and Track 1 tokens are not provided.


Track 2 sample structure for English (graphic):

Evaluation


Once the evaluation results are available, each team will be asked whether the results of their system can be released publicly; if not, the team will be able to anonymise its team name, which implies not publishing a system description paper.

For the evaluation, as last year, we will ask for both tokenised (for automatic evaluation) and detokenised (for human and possibly automatic evaluation) outputs. If no detokenised outputs are provided, the tokenised files will be used for human evaluation. Inputs from both in-domain and out-of-domain data will be found in the evaluation material.

For human evaluation, which is the primary evaluation method, we will use a method called Direct Assessment (Graham et al., 2016). Candidate outputs will be presented to human assessors who rate their (i) meaning similarity (relative to a human-authored reference sentence) and (ii) readability (no reference sentence) on a 0-100 rating scale. Systems will be ranked according to average ratings.

For the automatic evaluation, we will compute scores with the following metrics:

- BLEU: precision metric that computes the geometric mean of the n-gram precisions between generated text and reference texts and adds a brevity penalty for shorter sentences. We use the smoothed version and report results for n = 1, 2, 3, 4;

- NIST: related n-gram similarity metric weighted in favour of less frequent n-grams, which are taken to be more informative;

- BERTScore: token similarity metric using contextual embeddings;

- Normalised edit distance: inverse, normalised, character-based string-edit distance that starts by computing the minimum number of character inserts, deletes and substitutions (all at cost 1) required to turn the system output into the (single) reference text.

For each metric, we calculate (i) system-level scores (if the metric permits it), and (ii) the mean of the sentence-level scores. Output texts are normalised prior to computing metrics by lower-casing all tokens, removing any extraneous whitespace characters and ensuring consistent treatment of ampersands.
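To make the normalised edit distance more concrete, here is a minimal sketch of a sentence-level score. It is not the organisers' script: dividing by the length of the longer string is an assumption, the function names are hypothetical, and the ampersand handling mentioned above is not reproduced.

```python
def normalise(text):
    """Lower-case the text and collapse extraneous whitespace."""
    return " ".join(text.lower().split())


def levenshtein(a, b):
    """Minimum number of character inserts, deletes and substitutions (cost 1 each)."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            curr.append(min(
                prev[j] + 1,               # delete ca
                curr[j - 1] + 1,           # insert cb
                prev[j - 1] + (ca != cb),  # substitute, or match at cost 0
            ))
        prev = curr
    return prev[-1]


def dist_score(output, reference):
    """Inverse normalised edit distance: 1.0 means the strings are identical."""
    out, ref = normalise(output), normalise(reference)
    longest = max(len(out), len(ref))
    if longest == 0:
        return 1.0
    return 1.0 - levenshtein(out, ref) / longest
```

Under these assumptions, the mean of the sentence-level scores would simply be the average of `dist_score` over all output/reference pairs in a file.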

For a subset of the test data we may obtain additional alternative realisations via Mechanical Turk for use in the automatic evaluations.

For a subset of the test data we may carry out a new form of human evaluation, based on expert judgements (rather than the ‘general reader’ judgements we get from Direct Assessment).

In addition to the test sets provided to participating teams, we will also provide, near the end of the development period, additional test sets for all languages. The source and nature of these test sets will be revealed nearer the time.


Running the evaluation scripts

The evaluation scripts can be downloaded here:

- BLEU, NIST, DIST (Compatible with Python 2 and Python 3)

- BERTScore (V.0.3.5, Python 3)

Requirements: Python 2.7 or 3.4 and NLTK. For a clean environment virtualenv can be used:

Mac OS / Ubuntu

- Create a virtual environment for Python: 'virtualenv nx'.

- Activate the virtual environment: 'source nx/bin/activate'.

- Install NLTK: 'pip install -U nltk'.

- Command line: python eval_Py2.py [system-dir] [reference-dir].

- Use eval_Py3.py instead of eval_Py2.py if you use Python 3.4 or above.

Windows

- Install NLTK: 'pip install -U nltk'. In order to use pip, you may need to navigate to the directory that contains pip.exe through the command line before running the command.

- Command line: eval_Py2.py [system-dir] [reference-dir].

- Use eval_Py3.py instead of eval_Py2.py if you use Python 3.4 or above.

The [system-dir] folder contains the output of the system and the [reference-dir] folder contains the reference sentences. For each file found in the system directory [system-dir], the evaluation script looks up a file with the same name in the reference directory and applies BLEU, NIST and normalised edit distance to the pair.
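The same-name lookup described above can be approximated as follows, which may help when checking a submission folder before running the scripts. This is only a sketch of the pairing logic, not the evaluation script itself; `pair_files` is a hypothetical name.

```python
from pathlib import Path


def pair_files(system_dir, reference_dir):
    """Pair each system file with the same-named reference file, if present."""
    pairs, missing = [], []
    for sys_file in sorted(Path(system_dir).iterdir()):
        if not sys_file.is_file():
            continue
        ref_file = Path(reference_dir) / sys_file.name
        if ref_file.is_file():
            pairs.append((sys_file, ref_file))
        else:
            # No reference with this name: the file cannot be scored.
            missing.append(sys_file.name)
    return pairs, missing
```

Any name returned in `missing` points to an output file that has no counterpart in the reference directory, typically because of a naming mismatch.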

Note that in some cases, there are problems getting the correct NIST score with Python 3, so it is recommended to use the Python 2 script.

Submission


Submission of the results

The participating teams should send a .zip file with the outputs to msr.organizers@gmail.com by October 18 2020 at 11:59 PM (GMT-12:00), following the specifications detailed below.

- tokenised sample (Spanish dev example): #text = Elías Jaua , miembro del Congresillo , considera que los nuevos miembros del CNE deben tener experiencia para " dirigir procesos complejos " .

- detokenised sample (Spanish dev example): #text = Elías Jaua, miembro del Congresillo, considera que los nuevos miembros del CNE deben tener experiencia para "dirigir procesos complejos".

System description papers

After receiving the evaluations, if a team decides to make their results public, they are expected to write a paper to describe their system and discuss the results. The papers should be uploaded on the Softconf START conference management system.

Output specification

Teams are supposed to submit a single text file (UTF-8 encoding) per input test set in the format appended below, aligned with the respective input files. All output sentences have to start with the text marker '#text = ', and be preceded by the sentence ID ('#sent_id ='). In other words:


#sent_id = [id]

#text = [sentence]

Example:

#any comment

#sent_id = 1

#text = From the AP comes this story :

#sent_id = 2

#text = President Bush on Tuesday nominated two individuals to replace retiring jurists on federal courts in the Washington area.

A file that contains both the sentence IDs aligned with the test data and the empty '#text' field will be provided to the participants. For null outputs, the '#text = ' field should remain empty.
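A small helper along the following lines could be used to render outputs in this format. It is a sketch only: `format_outputs` is a hypothetical name, and the blank line between entries simply mirrors the layout of the example above.

```python
def format_outputs(sentences):
    """Render (sent_id, text) pairs in the '#sent_id' / '#text' output format."""
    lines = []
    for sent_id, text in sentences:
        lines.append("#sent_id = " + str(sent_id))
        # A null output keeps the '#text = ' marker with nothing after it.
        lines.append("#text = " + (text or ""))
        lines.append("")
    return "\n".join(lines)


rendered = format_outputs([(1, "From the AP comes this story :"), (2, None)])
```

When writing the result to disk, passing `encoding="utf-8"` to `open` keeps the file in the required encoding regardless of the platform default.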

Please provide both tokenised (for automatic evaluations) and detokenised (for human evaluations) outputs; if no detokenised outputs are provided, the tokenised files will also be used for the human evaluation.

The submissions should be compressed and the name of the .zip folder that you send should include the official team name. As output file names, use the same name as the input file, with the .txt extension: en_ewt-ud-test.conllu -> en_ewt-ud-test.txt. Make sure to keep the tokenised, detokenised, T1a, T1b, T2a and T2b outputs in separate and clearly labeled folders.
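The naming rule can be expressed in a few lines. In the sketch below, `output_name` follows the rule stated above, while the folder layout built by `output_path` (track, then tokenised or detokenised) is only one possible arrangement and is an assumption, as are the function names.

```python
from pathlib import Path


def output_name(input_name):
    """Map an input file name to its output name by swapping the extension for .txt."""
    return Path(input_name).with_suffix(".txt").name


def output_path(root, track, tokenised, input_name):
    """Hypothetical layout: <root>/<track>/<tokenised|detokenised>/<file>.txt"""
    kind = "tokenised" if tokenised else "detokenised"
    return Path(root) / track / kind / output_name(input_name)
```

For instance, `output_name("en_ewt-ud-test.conllu")` gives `en_ewt-ud-test.txt`, matching the example in the text.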

Finally, we provide the reference sentences for some of the datasets used in this evaluation; obviously, it is not allowed to use them in any way for generating the outputs.

Number of outputs

We allow one output per system; each team is allowed to submit several different systems, but please avoid submitting variations of what is essentially the same system. We may have to limit each team to a single nominated system for the human evaluations.

History


In 2018, the first edition of the workshop took place in Melbourne, Australia, co-located with ACL'18. Eight teams participated in the shared task and in the workshop. The proceedings of the workshop with the system descriptions and the task overview and results can be found in the MSR'18 workshop proceedings. In 2019, 14 teams submitted outputs to the challenge and the second edition of the workshop took place in Hong Kong at EMNLP’19; the MSR'19 workshop proceedings can also be downloaded.

A previous pilot surface realisation task had been run in 2011 (Pilot SR'11) as part of the Generation Challenges 2011 (GenChal’11), which was the fifth round of shared-task evaluation competitions (STECs) involving the generation of natural language. The results session for all GenChal’11 tasks was held as an integral part of ENLG’11 in Nancy which attracted around 50 delegates. GenChal’11 followed four previous events: the Pilot Attribute Selection for Generating Referring Expressions (ASGRE) Challenge in 2007 which held its results meeting at UCNLG+MT in Copenhagen, Denmark; Referring Expression Generation (REG) Challenges in 2008, with a results meeting at INLG’08 in Ohio, US; Generation Challenges 2009 with a results meeting at ENLG’09 in Athens, Greece; and Generation Challenges 2010 with a results meeting at INLG’10 in Trim, Ireland.

Contact

- If you have any questions or comments, please write to us: msr.organizers@gmail.com.

- The shared task is organised by the Multilingual Surface Realisation workshop committee: Anya Belz, Bernd Bohnet, Thiago Castro Ferreira, Yvette Graham, Simon Mille and Leo Wanner.

References

- Anja Belz, Michael White, Dominic Espinosa, Eric Kow, Deirdre Hogan, and Amanda Stent. 2011. The first surface realisation shared task: Overview and evaluation results. In Proceedings of the 13th European Workshop on Natural Language Generation, ENLG ’11, pages 217–226, Stroudsburg, PA, USA. Association for Computational Linguistics.

- Yvette Graham, Timothy Baldwin, Alistair Moffat and Justin Zobel. 2016. Can Machine Translation Systems be Evaluated by the Crowd Alone? In Journal of Natural Language Engineering (JNLE), Firstview.

- Simon Mille, Anja Belz, Bernd Bohnet, Leo Wanner. 2018. Underspecified Universal Dependency Structures as Inputs for Multilingual Surface Realisation. In Proceedings of the 11th International Conference on Natural Language Generation, 199-209, Tilburg, The Netherlands.

- Simon Mille, Anja Belz, Bernd Bohnet, Yvette Graham, Emily Pitler, Leo Wanner. 2018. The First Multilingual Surface Realisation Shared Task (SR'18): Overview and Evaluation Results. In Proceedings of the 1st Workshop on Multilingual Surface Realisation (MSR), 56th Annual Meeting of the Association for Computational Linguistics (ACL), 1-12, Melbourne, Australia.

- Simon Mille, Anja Belz, Bernd Bohnet, Yvette Graham, Leo Wanner. 2019. The Second Multilingual Surface Realisation Shared Task (SR'19): Overview and Evaluation Results. In Proceedings of the 2nd Workshop on Multilingual Surface Realisation (MSR), 2019 Conference on Empirical Methods in Natural Language Processing (EMNLP), 1-17, Hong Kong, China.

Funding

- (1) Science Foundation Ireland (sfi.ie) under the SFI Research Centres Programme co-funded under the European Regional Development Fund, grant number 13/RC/2106 (ADAPT Centre for Digital Content Technology, www.adaptcentre.ie) at Dublin City University;

(2) the Applied Data Analytics Research & Enterprise Group, University of Brighton, UK; and

(3) the European Commission under the H2020 via contracts to UPF, with the numbers 825079-STARTS (MindSpaces), 786731-RIA (CONNEXIONs), 779962-RIA (V4Design).
